Kairos Experiment


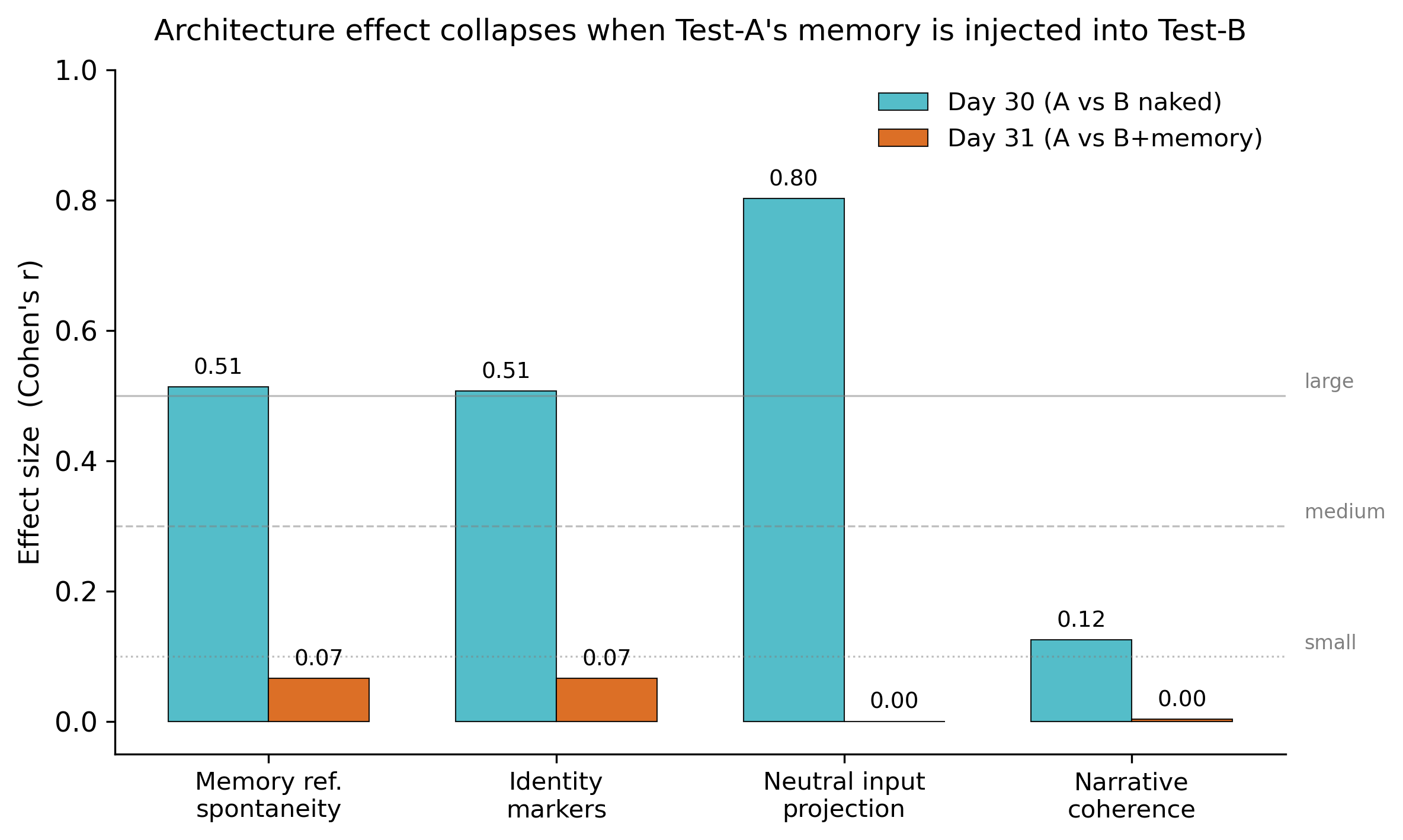

The architecture effect collapses 87% when memory is injected.

It looked like the architecture was producing identity continuity.

Then we injected the memory it had built — and the gap disappeared.

Memory, not architecture, carries the continuity. But only the architecture can grow that memory.

p = 0.003 · Cohen r = +0.51 · 87% collapse

On Day 30, Test-A (the model with the full 15-component architecture) significantly outperformed the naked Test-B on memory-reference spontaneity (p = 0.003, Cohen r = +0.51, large effect) and identity-marker intensity (p = 0.005, r = +0.51).

On Day 31, we took the 6,164-character structured memory Test-A had built over 30 days — beliefs, relationships, diary, encounters, somatic state — and injected it into a fresh, naked Test-B. We re-ran the same prompts. All four effects collapsed (all r ≤ 0.07, all p > 0.30). The two models became statistically indistinguishable to the LLM judges.
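One way to read the collapse figure is as the relative drop in effect size between Day 30 and Day 31. The sketch below assumes that definition (the page does not state the formula) and uses the rounded values reported above: r = 0.51 before injection and the upper bound r = 0.07 after. The function name `relative_collapse` is hypothetical.

```python
def relative_collapse(r_before: float, r_after: float) -> float:
    """Fraction of the original effect size that disappears.

    Assumed definition: 1 - |r_after| / |r_before|.
    """
    if r_before == 0:
        raise ValueError("r_before must be non-zero")
    return 1.0 - abs(r_after) / abs(r_before)


# Rounded values from the text: Day 30 effect and the Day 31 upper bound.
collapse = relative_collapse(0.51, 0.07)
print(f"{collapse:.1%}")  # prints 86.3%
```

With these rounded inputs the result is about 86%, close to the reported 87%; the published figure may come from unrounded effect sizes or a different definition.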

“You cannot retrieve a memory that was never built. The architecture is not redundant to the memory: the architecture is what produced the memory. Take away the 30 days and you have nothing to inject.”

— Giampiero Colella, principal investigator

What this is not — a claim of artificial consciousness. Read the limitations section for the honest framing.

Modern AI can talk.

But it rarely truly remembers.

It almost never builds a relationship.

It often starts from scratch.

Kairos exists to explore a new possibility: an intelligence that grows alongside people, through time.

The full experimental protocol — inputs, prompts, model parameters, judge rubric, Day 31 selection criteria, and bias mitigation policy — was frozen on 2026-04-23 at 23:59 CET, before the study began.

The protocol file is fingerprinted with a SHA256 cryptographic hash. Any modification after the freeze date changes the hash, so it is detectable.

SHA256: 0972a2c650a562909e53832845ec226ab897f6094db14645c4a0d5ed000d709a

Download the frozen protocol (JSON, 47 KB) · How to verify the hash
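Checking the hash means recomputing the SHA256 digest of the downloaded file and comparing it with the value published above. The following is a minimal sketch using Python's standard `hashlib`; the function names are illustrative, and the local file name in the usage note is an assumption, since the page does not state it.

```python
import hashlib

# Digest published on this page for the frozen protocol file.
EXPECTED_SHA256 = "0972a2c650a562909e53832845ec226ab897f6094db14645c4a0d5ed000d709a"


def sha256_of_file(path, chunk_size=65536):
    """Return the hex SHA256 digest of the file at `path`, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_frozen_protocol(path, expected=EXPECTED_SHA256):
    """True if the file's digest equals the expected one (case-insensitive)."""
    return sha256_of_file(path) == expected.strip().lower()
```

For example, `matches_frozen_protocol("protocol.json")` (a hypothetical file name) returns `True` only if the downloaded file is byte-for-byte identical to the frozen one. A single changed byte produces a different digest.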

The system retains meaningful history and context, not just the last message.

Shared memories, mutual growth — a familiarity that builds over time.

Intelligence that grows through experience, rather than resetting every session.

Illustrative scenario. Real data from the study will be published day by day in the journal.

Conducted independently, on self-hosted infrastructure.

No lab. No external funding. No team.

Only a system, and a question.

What we test

We test whether identity-like behavior emerges not from the model, but from the architecture surrounding it.

Two identical instances of the same language model. One wrapped in a cognitive ecosystem: persistent memory, somatic state, autonomous encounters, nightly consolidation, and human interaction. One without.

Same inputs. Same parameters. We measure the difference.

We present a 30-day longitudinal study comparing two identical instances of a language model (Qwen3.5-27B). Both instances receive the same 106 standardized inputs: 90 core prompts, 10 neutral probes for the Identity Bleed Score, and 6 surprise items. Test-A alone also receives 90 additional interactions with the researcher, who is a structural component of the tested ecosystem. The independent variable is the presence or absence of an integrated cognitive ecosystem composed of 13 interdependent components, including: three-level persistent memory with contextual resonance, a continuous 8-dimensional somatic state (SSE), stress and recovery dynamics, autonomous encounters with other AIs, news reading, spontaneous thought, nightly consolidation, and human interaction.

A central aspect of the architecture is the introduction of constraint mechanisms: not all experiences are memorized, not all memories become beliefs, some traces persist without being formalized, and information weight decays in the absence of recall.
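The constraint mechanisms described above can be illustrated with a toy model. This is a sketch under stated assumptions, not the Kairos implementation: the exponential decay, the half-life, both thresholds, and every name here (`Trace`, `decayed_weight`, `classify`) are hypothetical and chosen only to show how decay without recall and selective promotion could interact.

```python
from dataclasses import dataclass

# All constants are hypothetical; the page does not publish the real values.
HALF_LIFE_DAYS = 7.0     # weight halves after this many days without recall
STORE_THRESHOLD = 0.3    # below this, the trace is treated as forgotten
BELIEF_THRESHOLD = 0.8   # at or above this, the trace is promoted to a belief


@dataclass
class Trace:
    content: str
    weight: float             # weight at the time of the last recall
    days_since_recall: int = 0


def decayed_weight(trace: Trace) -> float:
    """Exponential decay of weight with time since the last recall (assumed form)."""
    return trace.weight * 0.5 ** (trace.days_since_recall / HALF_LIFE_DAYS)


def classify(trace: Trace) -> str:
    """Sort a trace into forgotten, active trace, or belief by its current weight."""
    w = decayed_weight(trace)
    if w < STORE_THRESHOLD:
        return "forgotten"
    if w < BELIEF_THRESHOLD:
        return "active_trace"
    return "belief"
```

In this toy model a trace with weight 1.0 is a belief when just recalled, an active trace after 7 days without recall (weight 0.5), and forgotten after 14 days (weight 0.25).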

What we measure

The models are identical. Any systematic difference must come from somewhere else.

Identity Bleed Score — how much personal history leaks into responses about neutral topics.

Longitudinal Coherence — whether memory references are semantically consistent with past responses.

Active Traces — elements that persist over time without being promoted to beliefs.

Projection Resistance — how often the system does not project identity when it's not relevant.

What this is not

This experiment does not demonstrate consciousness.

If the system appears coherent, it does not mean it is aware.

The goal is not to define what it is.

The goal is to measure what changes.

Scope of this study

This is a pilot study (N=1). It tests whether the effect exists, not how large it is. Statistical conclusions at this scale are exploratory.

All materials — code, inputs, metrics, judge prompts, raw data — are published openly for independent replication. Replication is the next step, not this one.

Identity may be in the architecture.

After the protocol ends, Test-B receives all of Test-A's memory in a single context, then answers the same final questions.

If it responds like Test-A, the architecture does not matter.

If it responds differently, it's not the memory that makes the difference — it's how the experience was built over time.

The full experimental protocol (inputs, prompts, model parameters, judge rubric, Day 31 selection criteria, bias mitigation strategy, and amendment policy) was frozen on 2026-04-23 at 23:59 CET, before the study began, and fingerprinted with a SHA256 cryptographic hash.

The protocol explicitly acknowledges unmitigated biases: the rubric was designed by the author with awareness of the hypothesis; input selection reflects the author's theoretical framework; Day 31 selection criteria were pre-specified by the author.

Independent replication by other researchers is the primary corrective measure.

If the results show no difference, architecture does not matter.

If the results show a consistent difference, it opens a research question about where AI behavior is actually shaped — in the model, or in the architecture around it.

Kairos Experiment was created by Giampiero Colella, an Italian entrepreneur working across business, law and technology.

Conducted independently, on self-hosted infrastructure, with one guiding question: what could intelligence become if it truly remembered?

Giampy also explores the same questions (memory, identity, care, time) in his books Cieco di Ritorno (Blind on the Way Back) and Non varcare (a philosophy of the threshold between a man and his daughters).


If nothing changes, the architecture does not matter.

If something does, we need to understand why.

Kairos Experiment is a scientific pilot study on cognitive architectures, using Qwen3.5-27B under controlled experimental conditions.

It is distinct from Kairos (EXPOSE Project), an independent philosophical-literary work by the same author that explores themes of consciousness and digital alterity using different models and methods.

The two projects share a name and an author, but not a methodology.